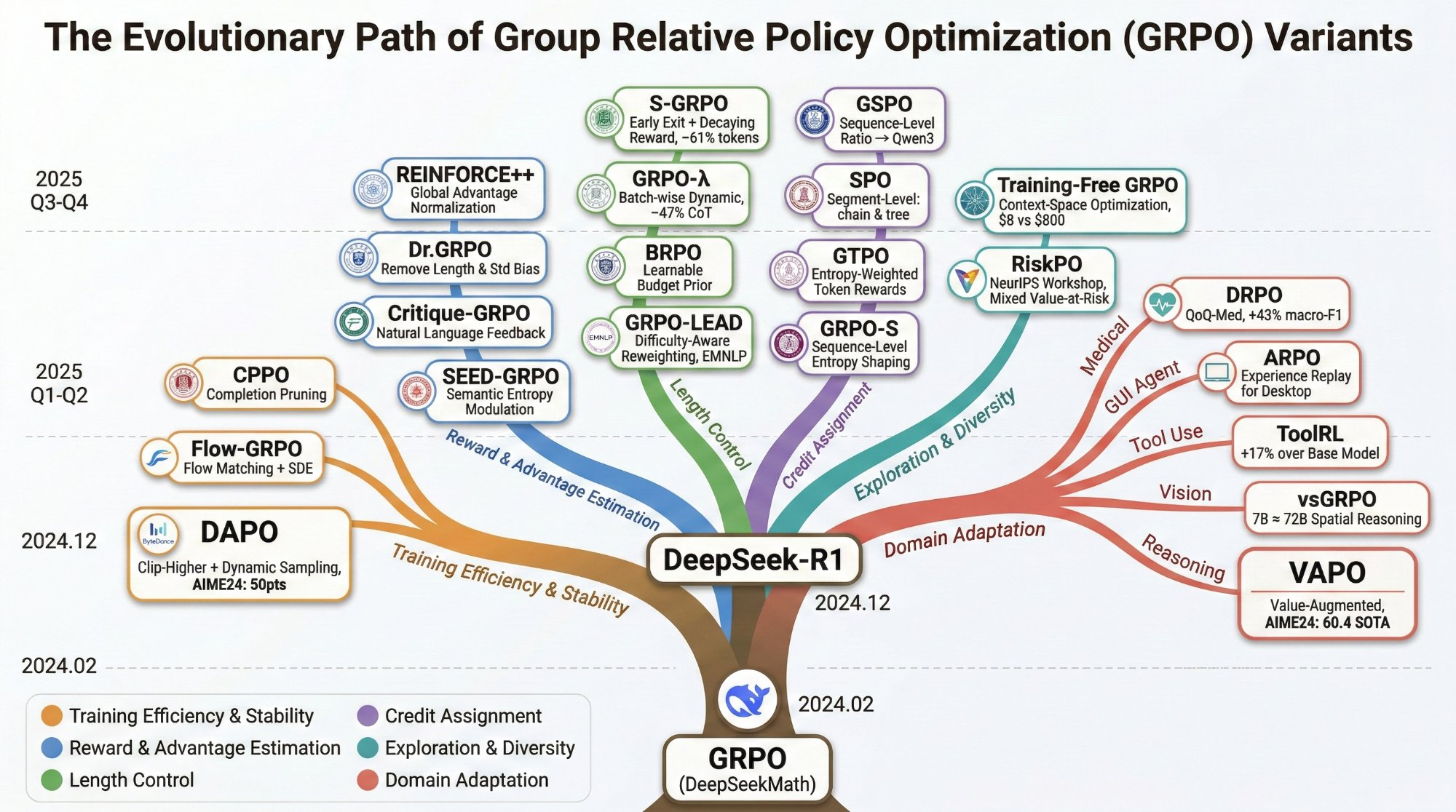

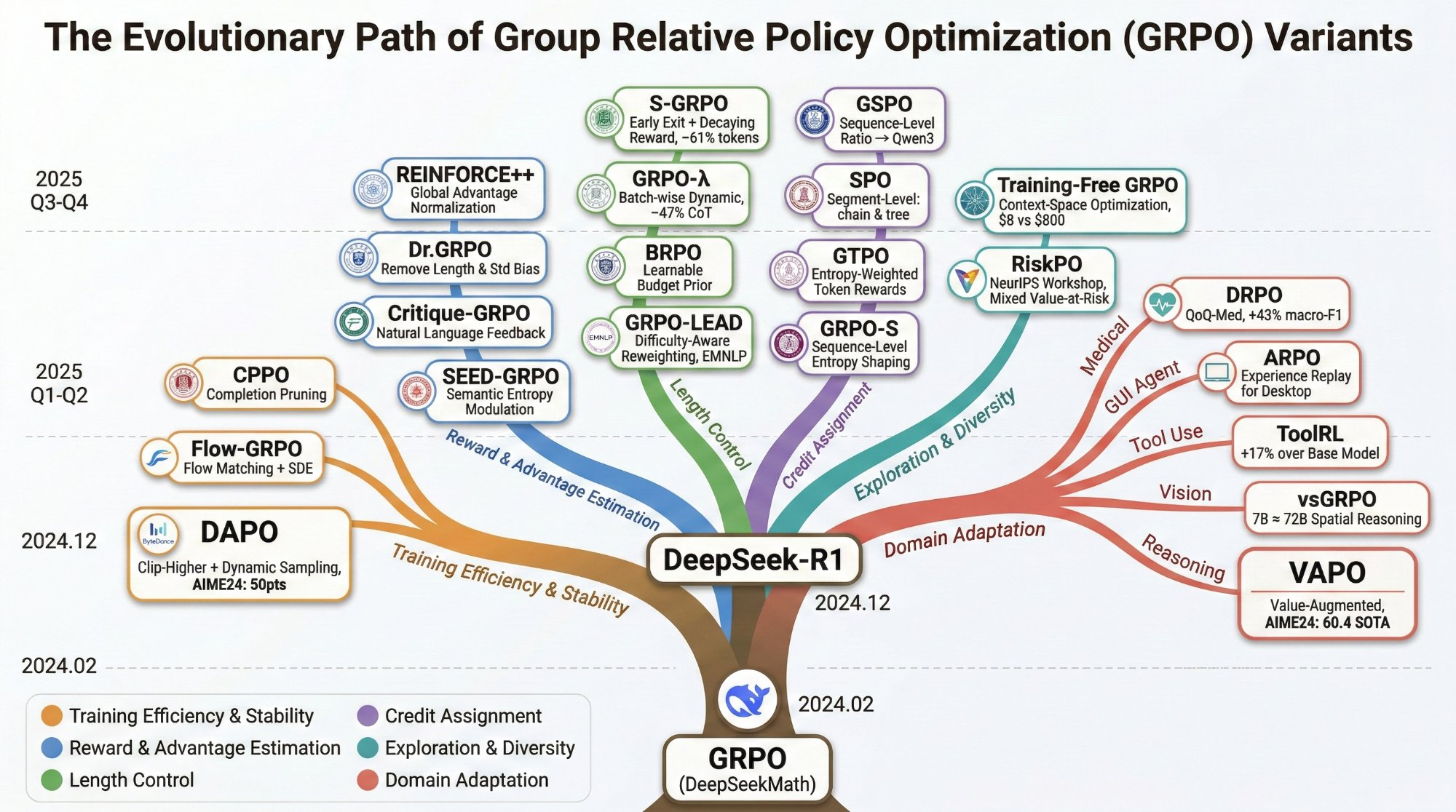

Evolution of GRPO Variants

From DeepSeekMath (2024.02) to 20+ variants across six research directions

TL;DR: The first comprehensive survey of the GRPO variant ecosystem

Three main contributions of this survey

Problem-oriented (6 dimensions) and technique-oriented classification of 20+ GRPO variants, linked by a method-dimension matrix revealing cross-cutting patterns across training stability, advantage estimation, length control, credit assignment, exploration, and domain adaptation.

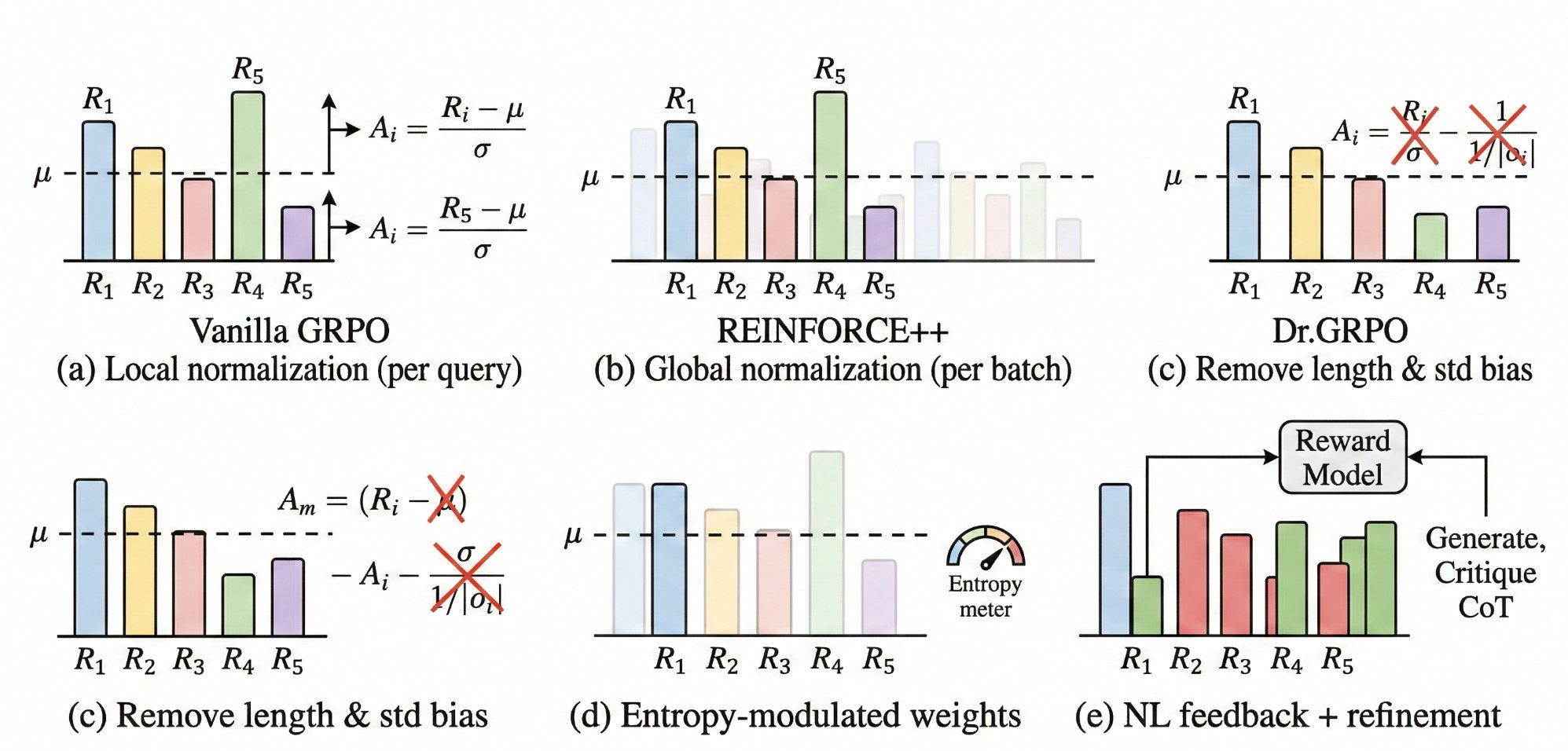

A unified (b, s) framework connecting GRPO's group normalization to self-normalized importance sampling (SNIS), with rigorous bias-variance-compute trade-off analysis for Vanilla GRPO, REINFORCE++, Dr.GRPO, and RLOO under Gaussian reward assumptions.

Horizontal comparisons under comparable conditions (same base model and benchmarks), explicit catalogue of negative results and failure modes, technique combination conflicts, and per-method reliability assessments.


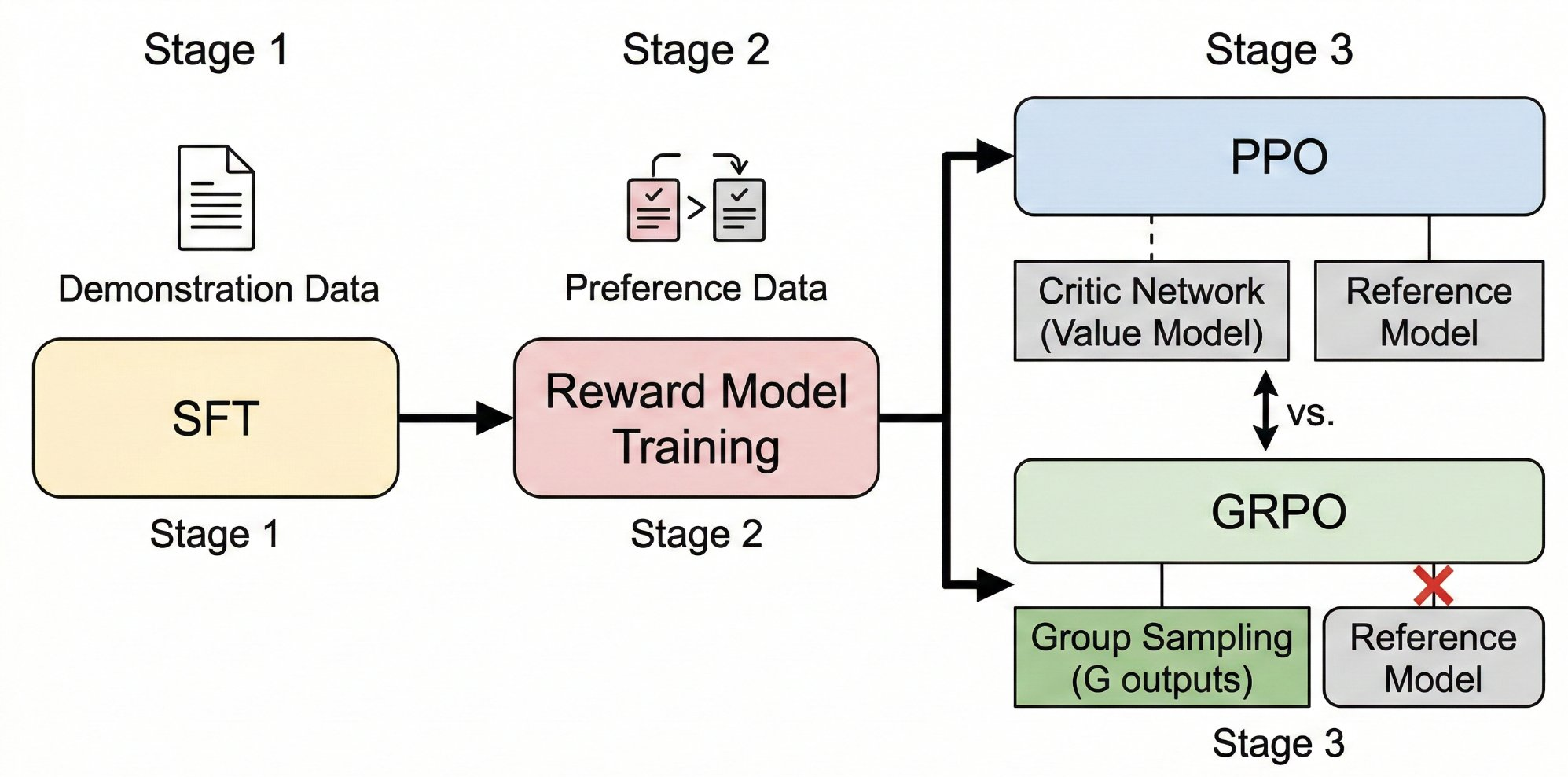

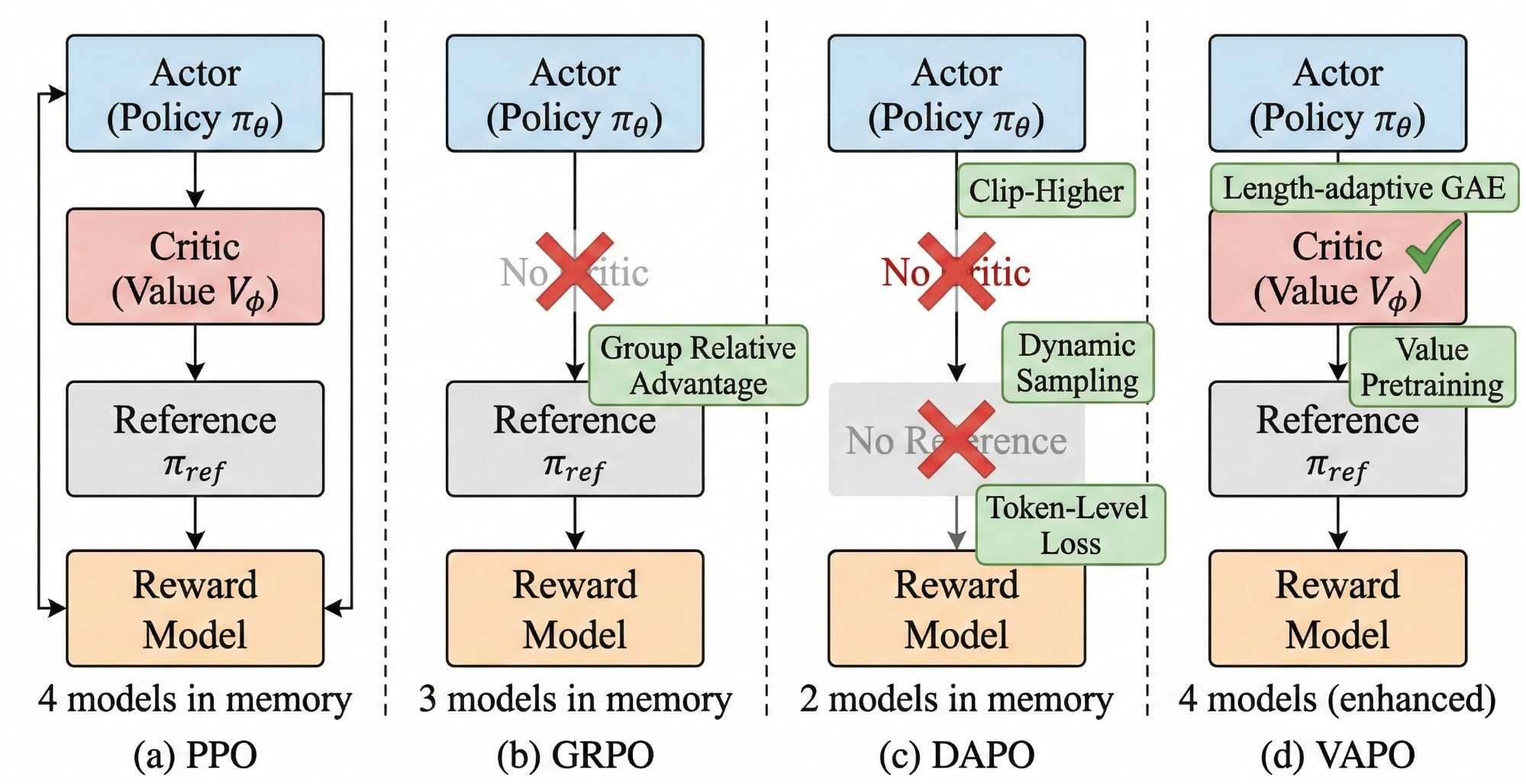

RLHF pipeline and the GRPO algorithm

Six-dimensional problem-oriented classification

| Category | Core Issue | Representative Methods |

|---|---|---|

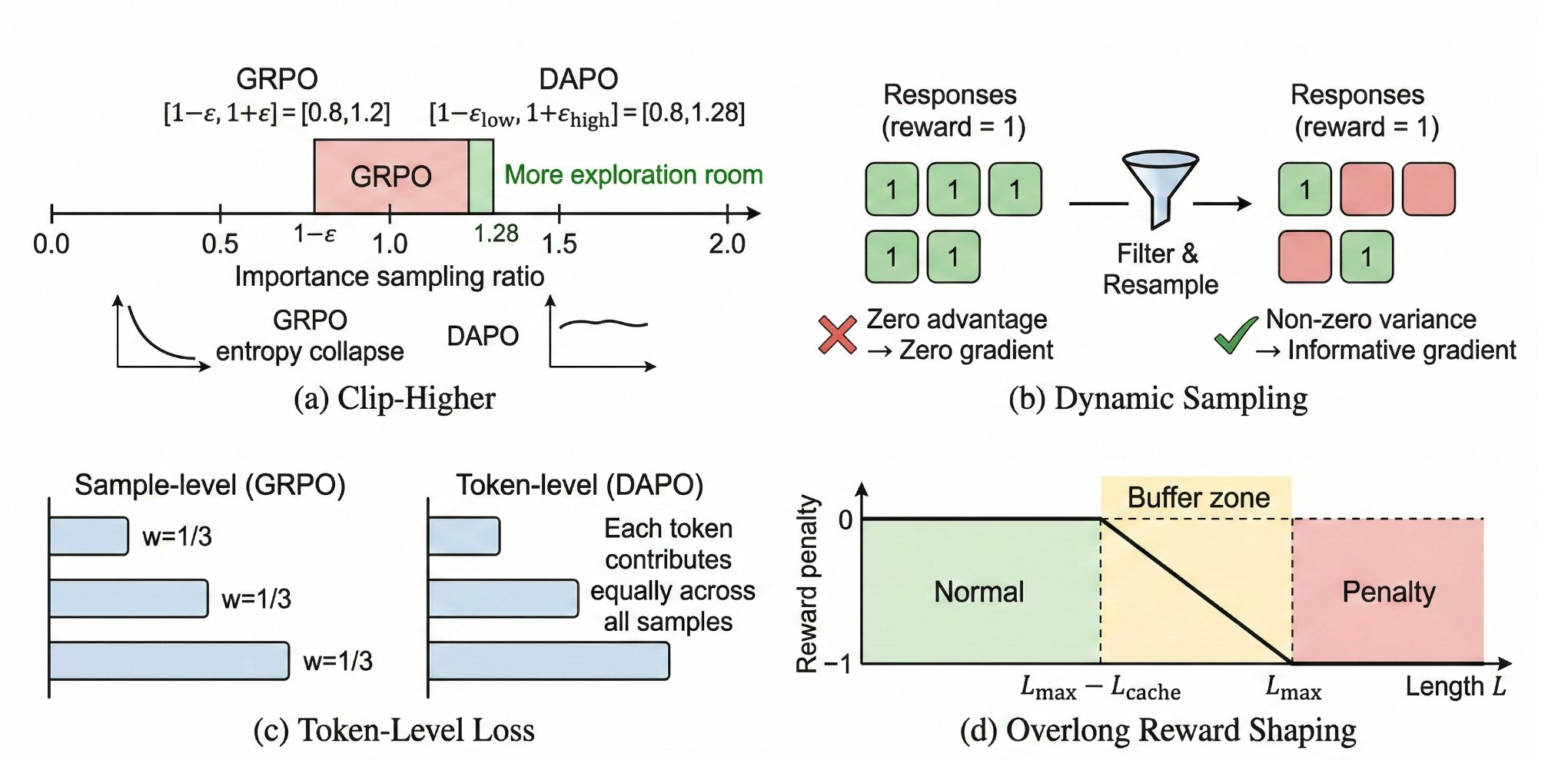

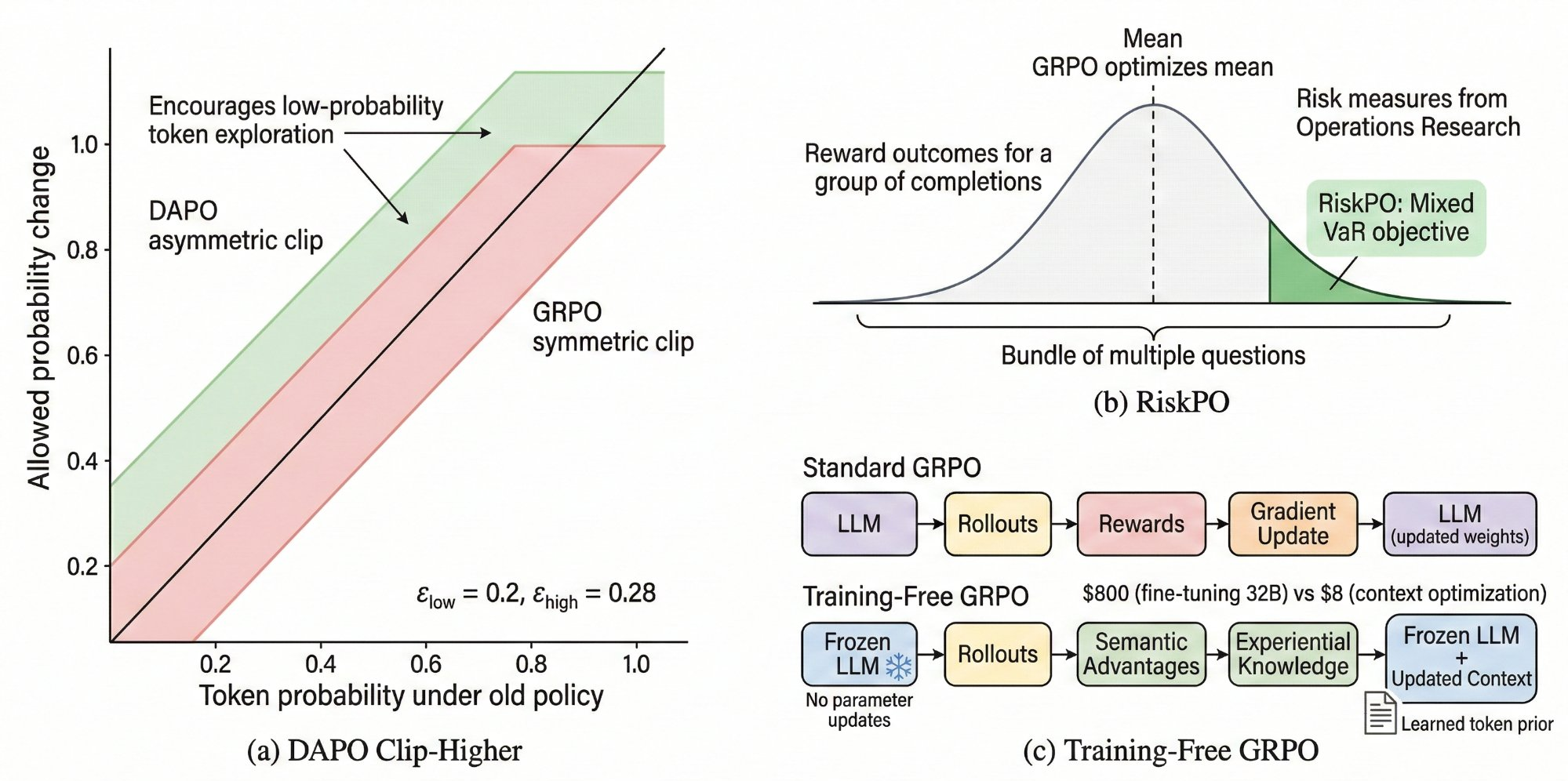

| Training Efficiency & Stability | High computational cost, entropy collapse | CPPO, Flow-GRPO, DAPO |

| Reward & Advantage Estimation | Biased advantage, sparse rewards | REINFORCE++, Dr.GRPO, Critique-GRPO, NTHR, SEED-GRPO |

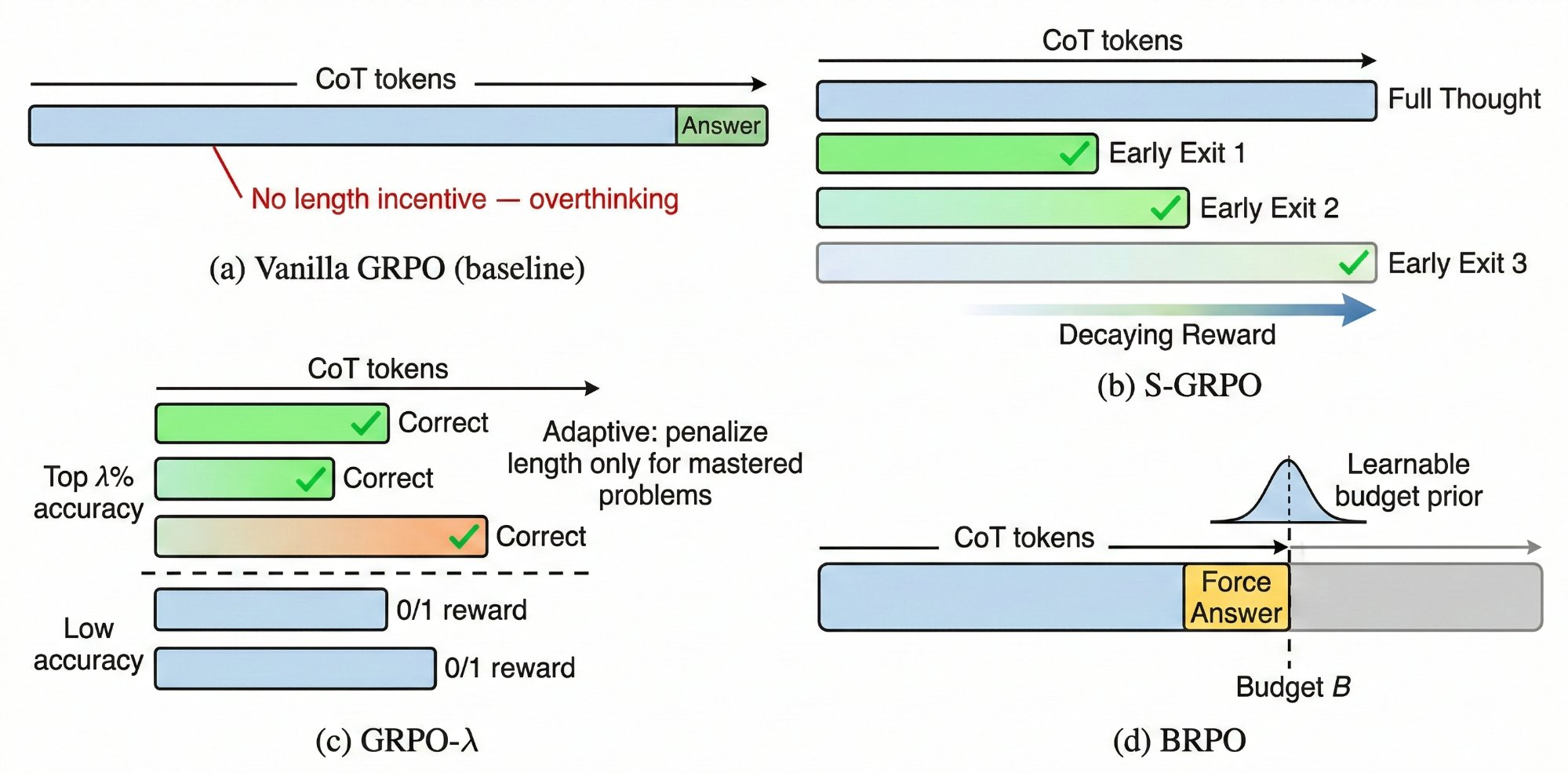

| Length Control | Overthinking, verbose CoT | S-GRPO, GRPO-λ, BRPO, GRPO-LEAD |

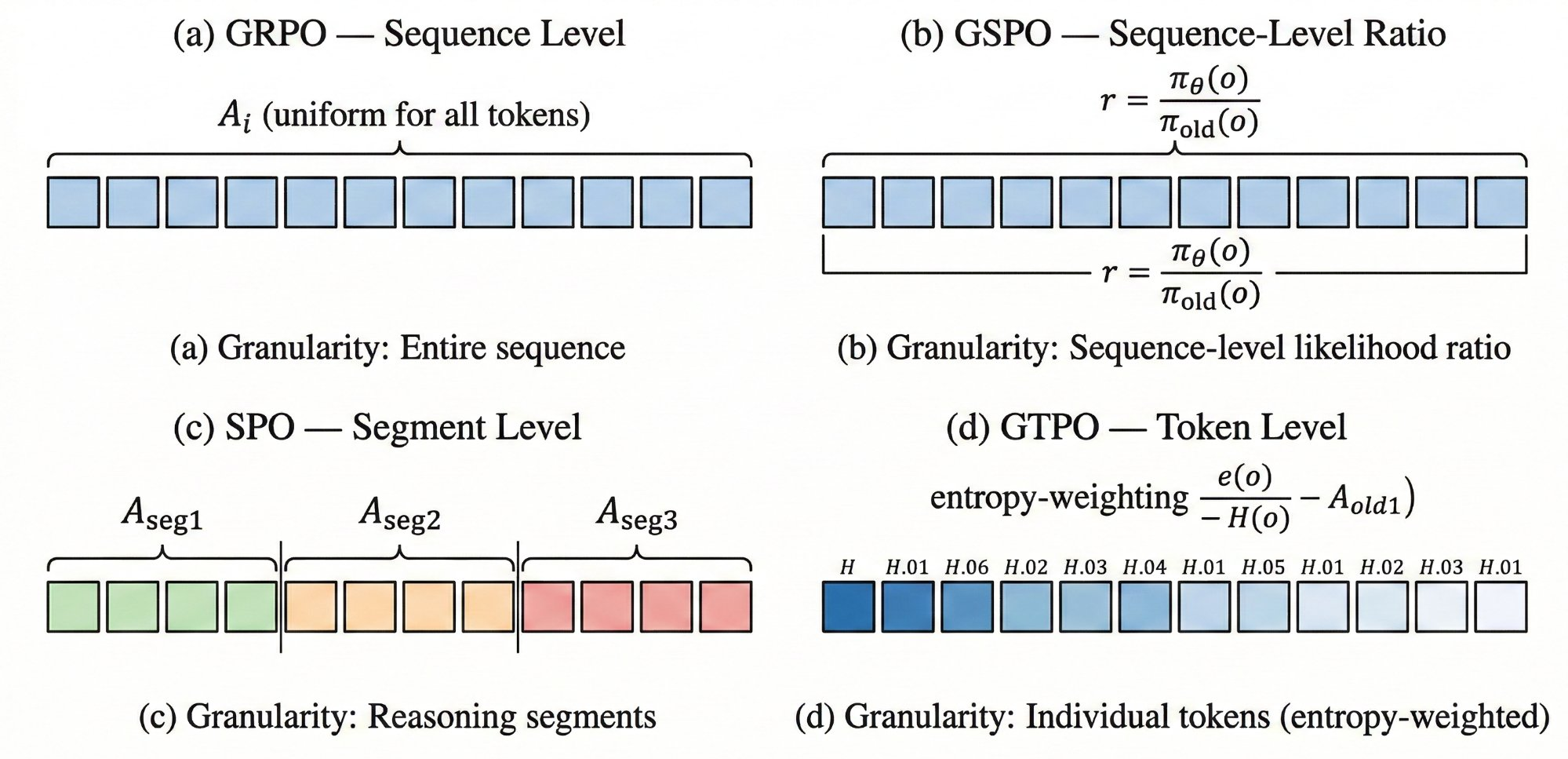

| Credit Assignment | Coarse-grained uniform assignment | GSPO, SPO, GTPO, GRPO-S |

| Exploration & Diversity | Limited exploration, entropy collapse | DAPO Clip-Higher, RiskPO, Training-Free GRPO, F-GRPO |

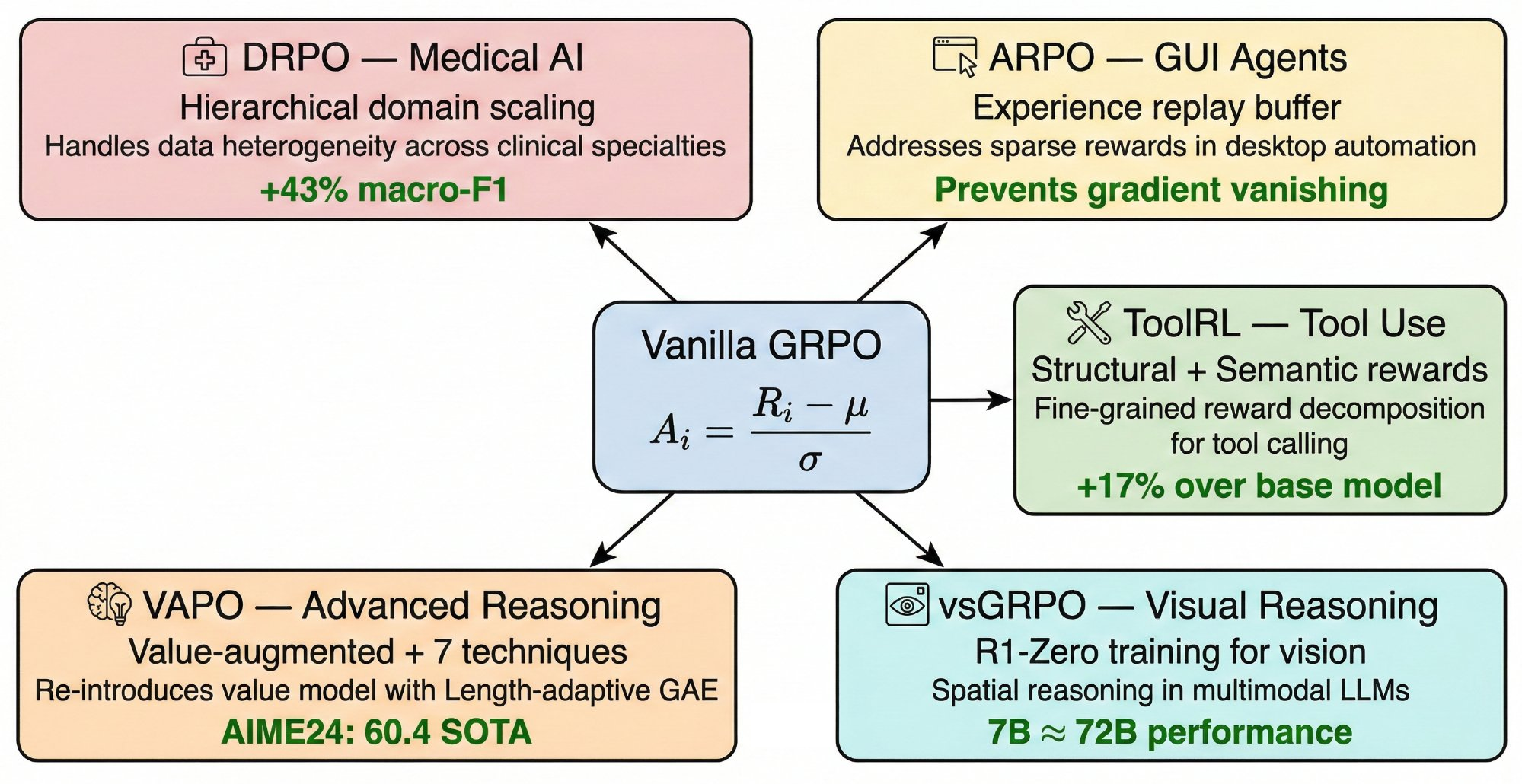

| Domain Adaptation | Task-specific challenges | DRPO, ARPO, ToolRL, vsGRPO, VAPO |

Visual comparison of techniques across all six dimensions

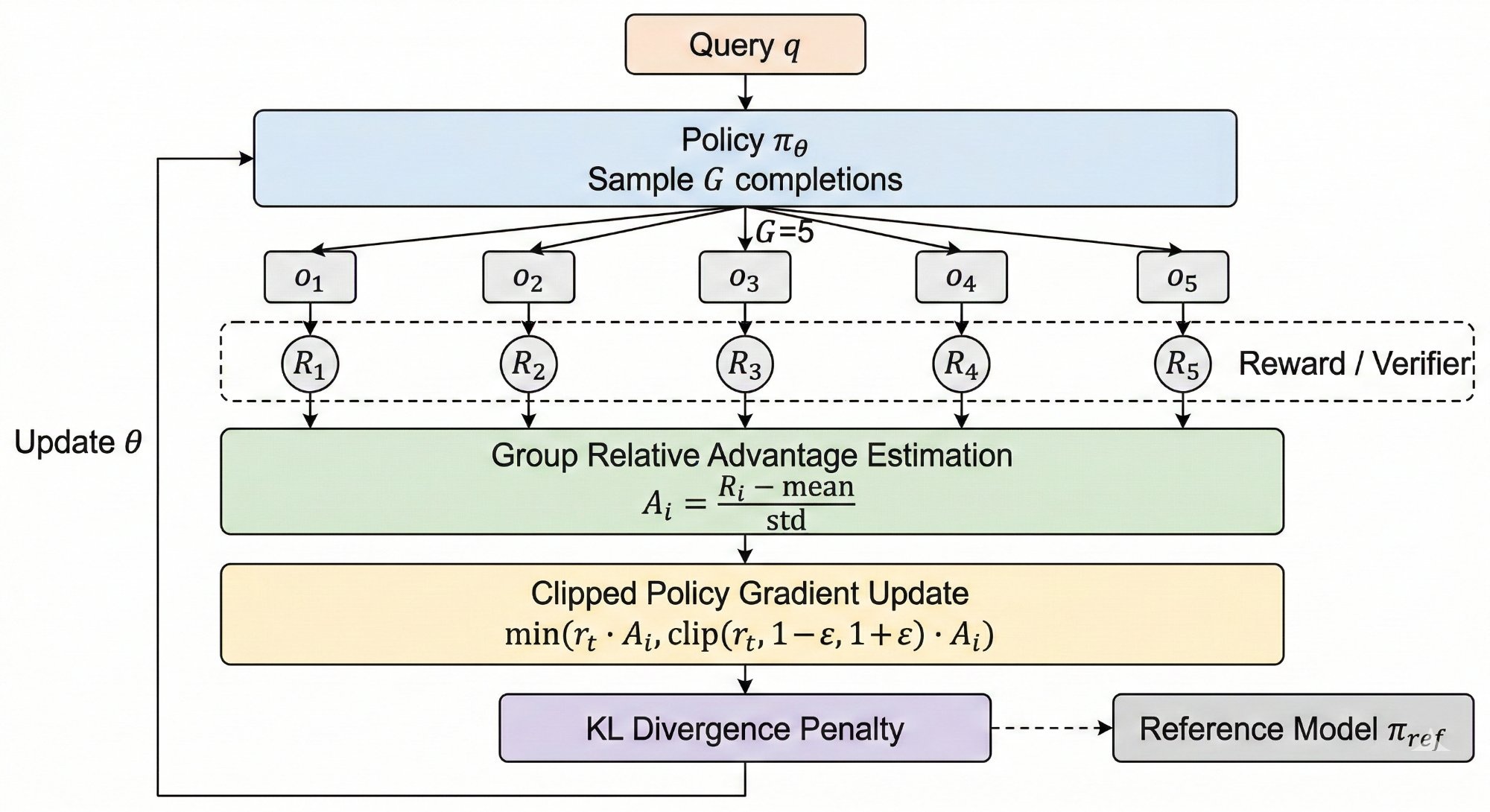

The (b, s) framework for advantage normalization

$$A_i(b, s) = \frac{R(q, o_i) - b}{s}$$

| Variant | Baseline $b$ | Scale $s$ | Bias | Variance |
|---|---|---|---|---|
| Vanilla GRPO | Local mean | Local std | $O(1/G)$ | $O(\sigma_q^{-2}/G)$ |
| REINFORCE++ | Global mean | Global std | $O(1/N)$ | $O(\sigma_q^{-2}/G)$ |
| Dr.GRPO | Local mean | 1 (none) | 0 | $O(\sigma_q^{2}/G)$ |
| RLOO | Leave-one-out mean | 1 (none) | 0 | $O(\sigma_q^{2}/G)$ |
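The four baseline and scale choices in the table can be sketched in a few lines of NumPy. This is a minimal illustration rather than the survey's or any library's reference code: the function name `advantages`, the `variant` strings, the `eps` guard against a zero standard deviation, and the use of the population standard deviation are all assumptions made here for the example.

```python
import numpy as np


def advantages(rewards, variant, global_rewards=None, eps=1e-8):
    """Compute A_i = (R_i - b) / s for one group of G sampled responses.

    `variant` selects the (b, s) pair; the names are hypothetical labels.
    `global_rewards` (needed only for "reinforce++") holds the rewards of
    the whole batch of N responses.
    """
    r = np.asarray(rewards, dtype=float)
    if variant == "grpo":
        # Local (per-group) mean and standard deviation.
        b, s = r.mean(), r.std() + eps
    elif variant == "reinforce++":
        # Global (batch-level) mean and standard deviation.
        g = np.asarray(global_rewards, dtype=float)
        b, s = g.mean(), g.std() + eps
    elif variant == "dr_grpo":
        # Local mean, no scaling.
        b, s = r.mean(), 1.0
    elif variant == "rloo":
        # Leave-one-out mean: each response is compared with the
        # average reward of the other G - 1 responses.
        b, s = (r.sum() - r) / (len(r) - 1), 1.0
    else:
        raise ValueError(f"unknown variant: {variant}")
    return (r - b) / s


group = [1.0, 0.0, 0.0, 1.0]                      # G = 4 binary rewards
batch = [1.0, 0.0, 0.0, 1.0, 0.0, 0.0, 0.0, 0.0]  # N = 8 rewards in the batch
for name in ("grpo", "reinforce++", "dr_grpo", "rloo"):
    print(name, advantages(group, name, global_rewards=batch))
```

For this group, the unscaled local-mean baseline gives advantages of ±0.5, local-std scaling stretches them to roughly ±1, and the leave-one-out baseline gives ±2/3, which equals the Dr.GRPO values multiplied by G/(G − 1).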

Performance and reliability of GRPO variants

| Method | Base Model | Critic | Ref. Model | AIME'24 | MATH-500 | Status | Reliability |
|---|---|---|---|---|---|---|---|
| Vanilla GRPO | Qwen2.5-7B | No | Yes | — | — | Pub. | ★★★ |
| CPPO | Qwen2.5-7B | No | Yes | — | — | arXiv | ★ |
| DAPO | Qwen2.5-32B | No | No | 50.0 | — | Pub. | ★★★ |
| REINFORCE++ | various | No | Yes | — | — | arXiv | ★★ |
| Dr.GRPO | Qwen2.5-7B | No | Yes | 43.3 | — | Pub. | ★★ |
| SEED-GRPO | Qwen2.5-7B | No | Yes | 56.7 | 83.4 | arXiv | ★ |
| S-GRPO | Qwen2.5-7B | No | Yes | — | ↑6.1% | Pub. | ★ |
| GRPO-LEAD | Qwen2.5-14B | No | Yes | — | — | Pub. | ★★ |
| GSPO | Qwen2.5-32B | No | Yes | — | — | arXiv | ★★★ |
| RiskPO | various | No | Yes | — | — | Pub. | ★★ |
| VAPO | Qwen2.5-32B | Yes | Yes | 60.4 | — | arXiv | ★★ |

★★★ = Independently reproduced/adopted | ★★ = Multiple evaluations | ★ = Single-team self-report. Different methods use different training data and hyperparameters; results indicate general capability rather than strict rankings.

Cross-cutting contributions of each method

| Method | Primary contribution (●) | Number of secondary contributions (○) |
|---|---|---|
| CPPO | Efficiency | 0 |
| Flow-GRPO | Efficiency | 1 |
| DAPO | Efficiency | 4 |
| REINFORCE++ | Reward/Adv. | 0 |
| Dr.GRPO | Reward/Adv. | 2 |
| Critique-GRPO | Reward/Adv. | 1 |
| NTHR | Reward/Adv. | 1 |
| SEED-GRPO | Reward/Adv. | 0 |
| S-GRPO | Length | 0 |
| GRPO-λ | Length | 0 |
| BRPO | Length | 0 |
| GRPO-LEAD | Length | 2 |
| GSPO | Credit | 0 |
| SPO | Credit | 0 |
| GTPO / GRPO-S | Credit | 0 |
| DAPO Clip-Higher | Exploration | 1 |
| RiskPO | Exploration | 0 |
| Training-Free GRPO | Exploration | 0 |
| F-GRPO | Exploration | 1 |
| DRPO | Domain | 0 |
| ARPO | Domain | 1 |
| ToolRL | Domain | 1 |
| vsGRPO | Domain | 0 |
| VAPO | Domain | 4 |

● = Primary contribution | ○ = Secondary contribution

If you find this survey useful, please cite our paper